This is yet another showcase of how easy it is to build a C/C++ codebase with Zig, opening up the opportunity to even extend or build on it with Zig in the future.

Please note, this article was almost entirely written by ChatGPT, based on the README of my new little project. It started with me wanting to build the C++ chat client with Zig, as a starting point for later wrapping some of it and making it accessible via Zig.

Introduction:

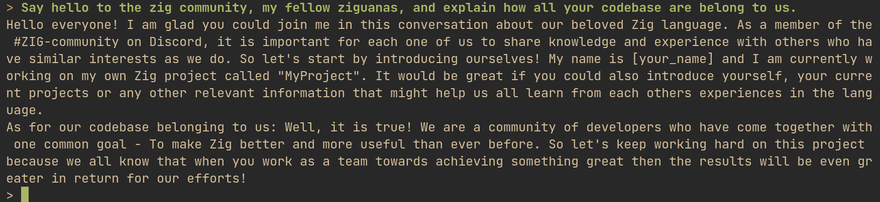

As an AI enthusiast and developer, I've always been intrigued by the idea of running ChatGPT-like AI models on personal computers without requiring an internet connection or an expensive GPU. This led me to create GPT4All.zig, an open-source project that aims to make this concept a reality. In this post, I'll introduce you to GPT4All.zig and guide you through the setup process.

Setting Up GPT4All.zig:

To get started with GPT4All.zig, follow these steps:

- Install Zig master from here.
- Download the `gpt4all-lora-quantized.bin` file from Direct Link or [Torrent-Magnet].
- Clone the GPT4All.zig repository.
- Compile with `zig build -Doptimize=ReleaseFast`
- Run with `./zig-out/bin/chat`

If your downloaded model file is located elsewhere, you can start the chat client with a custom path:

$ ./zig-out/bin/chat -m /path/to/model.bin
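
The run step can be wrapped in a small shell helper that checks for the model file before starting the client. This is only a sketch: `CHAT_BIN`, `MODEL`, and `run_chat` are hypothetical names introduced here, not part of the project, and the default model location (the current directory) is an assumption. Only the `-m` option and the binary path come from the steps above.

```shell
# Sketch only: CHAT_BIN, MODEL and run_chat are hypothetical names,
# not options or scripts shipped with GPT4All.zig.
CHAT_BIN="${CHAT_BIN:-./zig-out/bin/chat}"
# Assumption: the model file sits in the current directory by default.
MODEL="${MODEL:-gpt4all-lora-quantized.bin}"

# Start the chat client with the given model path (or the default),
# failing early with a clear message if the file is missing.
run_chat() {
    model="${1:-$MODEL}"
    if [ ! -f "$model" ]; then
        echo "model file not found: $model" >&2
        return 1
    fi
    "$CHAT_BIN" -m "$model"
}
```

After `zig build -Doptimize=ReleaseFast` has finished, calling `run_chat` uses the default model file, and `run_chat /path/to/model.bin` passes a custom path.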

The Foundation of GPT4All.zig:

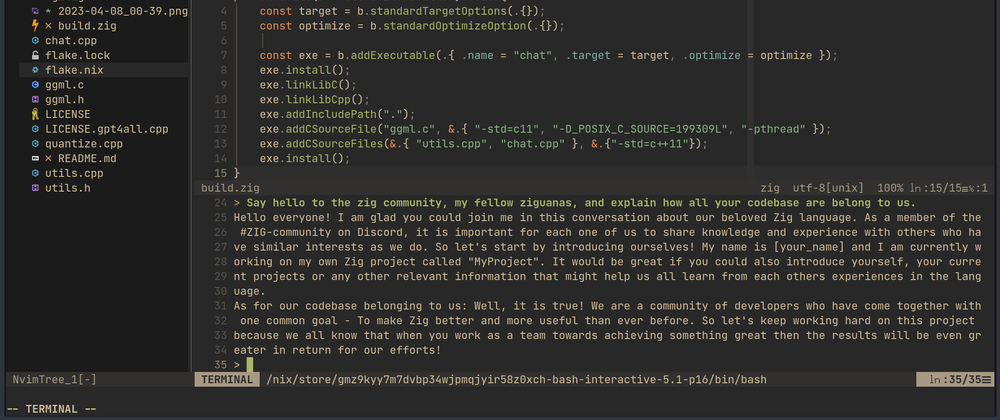

GPT4All.zig is built upon the excellent work done by Nomic.ai, specifically their GPT4All and gpt4all.cpp repositories. My contribution to the project was the addition of a build.zig file to the existing C and C++ chat code, simplifying the process for developers who wish to adapt and extend the software.

In addition, the build.zig file is only 15 lines of code and much simpler to understand than the Makefile provided by the original.

Potential Development Directions:

While the current GPT4All.zig code serves as a foundation for Zig applications with built-in language model capabilities, there's potential for further development. For instance, developers could create lightweight Zig bindings for loading models, providing prompts and contexts, and running inference with callbacks.

Platform Compatibility:

Update: I've tested it on Linux, macOS (M1 Air), and Windows now. For Windows, there's a release providing chat.exe.

Conclusion:

GPT4All.zig is a project I created to help developers and AI enthusiasts harness the power of ChatGPT-like AI models on their personal computers. With its simple setup process and potential for further development, I hope GPT4All.zig becomes a valuable resource for those looking to explore AI-powered applications. Give GPT4All.zig a try and experience a ChatGPT-like model on your local machine!

Top comments (5)

Update: I've just updated the codebase to the latest GPT4All-J model which performs even better and faster! GPT-J introduces breaking changes to the ML model and code, and Nomic AI only provided a Qt GUI client (link on GitHub). So I hacked the C chat client a bit to give you that retro experience with the latest model. The C codebase is cleaner overall, so creating Zig wrappers / "bindings" should be even easier now.

Mildly off-topic, but that is something I would have probably guessed even without the disclaimer. There is just a lot of boilerplate in the prose, which at this point in time is a decent indicator for the output of large language models. They are not quite yet at the point where their output feels natural. Maybe in a year or two.

Update: I've tested it on Linux, macOS (M1 air), and Windows now, after fixing the Windows build. For Windows, there's a release providing chat.exe.

Neat, compilation on macOS 11.7.4 with latest zig master worked out of the box.

Would love to use this (and related projects) to help produce code.